Software developers are on a never-ending mission to increase their efficiency. This is what made cloud computing so popular in the first place: bringing down the cost of change by building abstractions over shared resources.

There is an important precondition for sharing resources in the public cloud, of course, and that is to provide good-enough isolation between tenants of a shared system. From the perspective of an individual customer, this means that it seems like the whole infrastructure belongs solely to them. Data security, privacy, and resource dependability all drive this need.

Much like some people call out the “Third Wave of Cloud Computing”, the cloud’s underlying virtualization technology is exploring its third iteration, too. The first step was Virtual Machines, allowing us to share the same physical hardware between multiple tenants, each running their own independent operating system. The second step was enabled by what’s commonly known as Docker containers. This made it possible to share a single operating system more or less securely. More importantly, containers are very neat deployment units to ship applications. Kubernetes allows us to run distributed clusters of virtual machines, each of them running containers that run our apps reliably.

WebAssembly is a new development in software isolation & deployment technologies. Technically speaking, it is an open standard for cross-platform executables based on a stack-based virtual machine. Wasm programs are packaged in self-contained Wasm modules. In essence, WebAssembly (Wasm) combines the runtime performance of native programs (low CPU and memory overhead) with a tightly sandboxed (secure) environment. Wasm support in web browsers is already great, and adoption in the Cloud Native ecosystem is gaining traction.

I base my hypotheses for headless Wasm use cases on the assumption that Wasm runtimes provide proper resource and data isolation between modules, making it feasible to run Wasm modules side-by-side for multiple tenants in a public cloud environment. Moreover, the hypotheses build on certain key trends in software engineering over the last decades.

My two hypotheses are:

- With the advent of lightweight & secure code isolation, we will see a trend towards user-provided logic as a means for users to augment existing web applications. This can be used to customize the application’s user interface or business logic and to improve integration with external systems.

- In web application development, there will be a trend towards more system abstraction. This is similar to how web frameworks such as Ruby on Rails greatly reduced the effort of building custom web apps by providing a shared understanding of commonly used features like templating, database persistence, and email delivery. The next iteration will focus on patterns that align business logic with distributed systems. This moves business logic closer to databases, message brokers, etc. Wasm can do this in a more productive and user-friendly fashion than prior, mostly unpopular, database programming languages like PL/SQL. These technologies mostly failed because of their poor developer experience and lack of flexibility.

In the following paragraphs, I’ll describe each of the hypotheses in more detail, present use cases, and compare the trends to similar technologies. I’ll quickly describe and link to existing projects wherever they already exist.

1. User-provided Logic

This term is inspired by the trend that made Web 2.0 popular: User-generated Content. Technology experts could always contribute to the web, but the web only got popular once it could support its users with rich user interfaces and applications connected to their daily lives, such as social networks. User-generated Content summarizes this transition from the read-only to the read-write web.

The concepts I describe in this section were introduced by Connor Hicks in a talk about Extensible Cloud-Native Application with WebAssembly. Similar concepts may also exist elsewhere already.

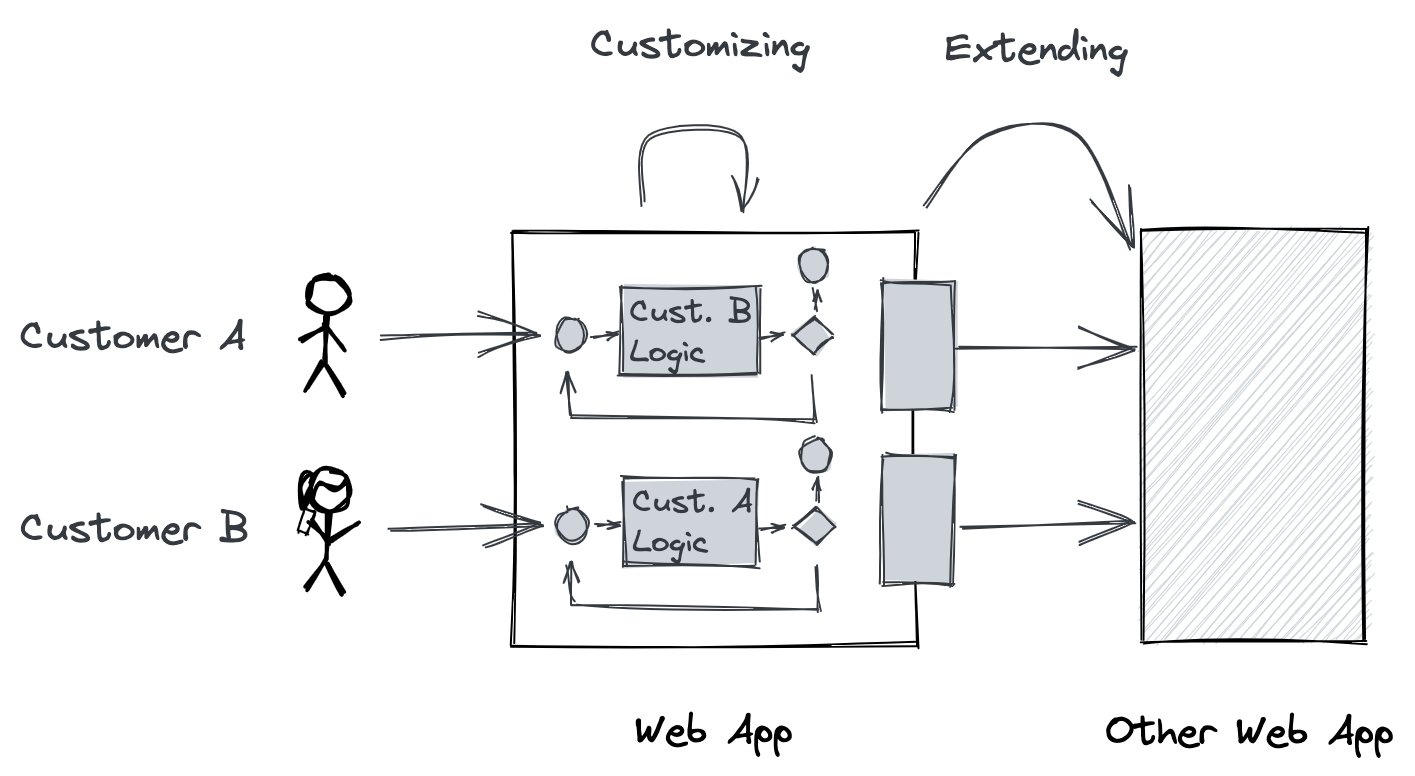

Customizability

Most web apps are customizable to a certain degree by their end-users. For example, many web apps now offer their users the choice between a light and a dark color theme. This is done to account for custom needs, compliance, etc. Some web apps are customizable to the point where it’s possible to change the flow of data within the application. As an example, GitHub allows its users to change the platform behavior with GitHub Actions, e.g. to add custom labels to Pull Requests based on user-defined conditions. I predict that more and more web apps will offer this level of customizability, and Wasm is a very good technological fit because of its versatility and security. Given a well-defined interface, users encode their logic into a Wasm module, and the web app runs the module whenever necessary and acts on its result.
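To make the idea of a well-defined interface more concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `PullRequest` shape, the `LabelRule` contract, and the `run_rules` helper are hypothetical, and a plain Python callable stands in for an instantiated Wasm module. A real host would load each module through a Wasm runtime and call one of its exported functions instead.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Tuple


@dataclass(frozen=True)
class PullRequest:
    """Data the host app hands to user-provided logic (hypothetical shape)."""
    title: str
    changed_files: Tuple[str, ...]


# Hypothetical contract: a rule receives a pull request and returns labels.
# In a real host, this callable would wrap an exported function of a
# sandboxed Wasm module instead of being plain Python.
LabelRule = Callable[[PullRequest], Iterable[str]]


def run_rules(pr: PullRequest, rules: List[LabelRule]) -> List[str]:
    """Run every user-provided rule and return the sorted, deduplicated labels."""
    labels = set()
    for rule in rules:
        try:
            labels.update(rule(pr))
        except Exception:
            # A failing user rule must not break the host application.
            continue
    return sorted(labels)


def docs_rule(pr: PullRequest) -> List[str]:
    """Example user logic: label pull requests that touch Markdown files."""
    touches_docs = any(name.endswith(".md") for name in pr.changed_files)
    return ["documentation"] if touches_docs else []


def broken_rule(pr: PullRequest) -> List[str]:
    """Example of faulty user logic that the host has to tolerate."""
    raise RuntimeError("bug in user code")


pr = PullRequest(title="Update README", changed_files=("README.md", "main.py"))
labels = run_rules(pr, [docs_rule, broken_rule])
print(labels)
```

The host only depends on the contract, not on who wrote the rule. This sketch isolates failures with a `try`/`except`; with Wasm, the sandbox would additionally isolate memory and restrict system access.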

This is by no means a binary transition, but existing web apps can rather be transformed gradually. As a first step, the existing preferences can be transformed to code without even changing the UI. Then, certain key customer groups with special requirements are given permission to contribute. The feature is enabled for the “ordinary end-user” last and, most importantly, those users are provided with a fitting means of developing such modules. Easily accessible software development is not in scope for this article, but inspiration can be taken from graphical programming platforms (like Scratch), Low/No-Code Platforms, and existing rule engines like IFTTT or Zapier.

💡 Apps with Wasm-based customization features

Shopify Scripts allows store owners to provide snippets of code for personalized experiences in an online shop. They seem to work on a JavaScript-to-WebAssembly toolchain.

Certain infrastructure projects have adopted similar features: Envoy is a proxy that supports Wasm-based filters, Kubewarden policies are supplied as Wasm modules (see this talk), and Istio is a service mesh for Kubernetes that now runs Wasm plugins.

Worth mentioning but not directly related: Microsoft Flight Simulator supports Wasm-based plugins only and the Amazon Prime Video app uses Wasm under the hood.

Extensibility

In most cases, web apps deliver their value in an application network (as “microservices”) rather than alone. For example, the value of a social network depends on its users’ ability to share web content outside of it. Extensibility describes this need: connecting a web application with external APIs and services.

Web apps usually provide hook points in the app’s control flow for creating workflows with the data processed by the app. WebHooks are a popular mechanism to send events between web apps. With open standards like OAuth2, web apps can be securely integrated with one another. WebHooks are so popular because they’re so easy to use. It is as simple as configuring an HTTP endpoint in the sending web app and reacting to requests on the receiving end. But the downsides become obvious on closer inspection: performance & response times vary because each separate request is routed over the internet, it is hard to implement the receiving end with sufficient dependability, and there is no common standard to validate the identity of the sending and receiving ends.
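In the absence of a common standard, a widespread convention among webhook providers is to sign the request body with a shared secret using an HMAC, so that the receiver can check where a request came from. The following Python sketch shows that convention under stated assumptions: the function names and the secret are hypothetical, and how the signature travels (typically in an HTTP header) differs between providers.

```python
import hashlib
import hmac


def sign_payload(secret: bytes, payload: bytes) -> str:
    """Sender side: compute a hex HMAC-SHA256 signature over the raw request body."""
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def verify_payload(secret: bytes, payload: bytes, signature: str) -> bool:
    """Receiver side: recompute the signature and compare it in constant time."""
    expected = sign_payload(secret, payload)
    return hmac.compare_digest(expected, signature)


# Hypothetical secret, exchanged out of band when the webhook is configured.
secret = b"shared-secret-for-illustration"
body = b'{"event": "pull_request.opened"}'
signature = sign_payload(secret, body)

ok = verify_payload(secret, body, signature)
tampered = verify_payload(secret, body + b" ", signature)
print(ok, tampered)
```

Every receiver has to implement and maintain this check itself, which is part of what makes a dependable receiving end laborious.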

Sometimes web apps have native integrations, which means both ends have agreed on a contract for a shared API, but this has the same limitations I showed earlier for customizing web app features.

2. High-Level System Abstractions

Higher-level abstractions enable higher development productivity and lower the cost of maintenance. The reason why high-level web frameworks like Ruby on Rails, Django, or Laravel have become so popular for custom web apps is that they provide a level of abstraction that boosts productivity without limiting versatility too much. They provide a default implementation for commonly used features like HTML templates, Object-Relational Mapping to a SQL database, or E-Mail sending.

The operational side of web apps built with these frameworks is still very much undefined. They all come with an HTTP application server and the current best practice is to distribute this HTTP application server in a Linux container image and run it on a container scheduler (like Kubernetes) or on a PaaS (like Heroku).

For this operational side, I see two distinct tasks. The first is to relieve the web app developer of operational infrastructure duties: making sure the containers run properly, taking care of load balancing, graceful shutdowns, etc. The second is to introduce an operational model suited to tasks outside the HTTP request cycle on which web frameworks focus. This includes tasks like background processing, processing data in batches or streams, message brokers, and workflows.

Tackling this issue calls for a more generic layer of abstraction on top of containers to provide a better developer experience. This abstraction layer handles the nitty-gritty details, for example how to reliably subscribe to a queue of events and execute a piece of business logic for every message.
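As a rough illustration of the details such a layer would hide, the following Python sketch drains an in-memory queue, retries failed messages, and sets aside messages that keep failing. It is a simplified model under stated assumptions: the `consume` function, the retry limit, and the dead-letter list are hypothetical, and the standard library's `queue.Queue` stands in for a real message broker.

```python
import queue
from typing import Callable, Dict, List


def consume(
    events: "queue.Queue[str]",
    handler: Callable[[str], None],
    max_attempts: int = 3,
) -> List[str]:
    """Call handler for each queued message; return messages that never succeeded."""
    dead_letters: List[str] = []
    while True:
        try:
            message = events.get_nowait()
        except queue.Empty:
            return dead_letters
        for attempt in range(1, max_attempts + 1):
            try:
                handler(message)
                break  # processed successfully, move on to the next message
            except Exception:
                if attempt == max_attempts:
                    # Give up and keep the message for later inspection.
                    dead_letters.append(message)
        events.task_done()


# Business logic: the only part the developer would ideally have to write.
processed: List[str] = []
remaining_failures: Dict[str, int] = {"b": 1}  # "b" fails once, then succeeds


def handle(message: str) -> None:
    if message == "poison":
        raise ValueError("cannot be processed")
    if remaining_failures.get(message, 0) > 0:
        remaining_failures[message] -= 1
        raise RuntimeError("transient failure")
    processed.append(message)


events: "queue.Queue[str]" = queue.Queue()
for item in ["a", "b", "poison", "c"]:
    events.put(item)

dead = consume(events, handle)
print(processed, dead)
```

In the model proposed here, `handle` would be shipped as a Wasm module, and everything in `consume` would be provided by the platform.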

I’m aware that this is not an entirely new idea. Database programming languages like PL/SQL could achieve roughly similar benefits in the past but a developer-friendly and widely adopted toolchain never emerged.

Interesting system categories for such abstraction frameworks include:

- Batch Processing Systems

A batch processing system runs a processing job on a large amount of input data and produces some output data. These jobs often take some time (minutes to days) and may run periodically [1].

A Wasm-based batch processing system abstracts the particularities of a processing job from a specific framework or programming language to enable better interoperability between jobs in a pipeline. State-of-the-art batch processors like Apache Spark rely on the jobs to be implemented in a restricted set of languages like Java, Scala, or Python.

- Stream Processing Systems

A stream processing system processes data in near-real-time and its use cases are between systems for business transaction processing (OLTP) and analytical use cases (OLAP). Like a batch processor, an input is consumed and an output is produced. The difference is that a stream processor handles events shortly after they occur, whereas batch processing operates on a fixed dataset [1].

A Wasm-based stream processing system provides a common interface for creating stream operators in an arbitrary Wasm-compatible programming language. Just-in-time compilation and bytecode optimization are hot research topics for high-performance stream processing, and both can be made more generic using Wasm.

💡Fluvio builds a data streaming platform with operators as Wasm modules. The RedPanda Kafka Streaming Platform supports inline data transformations provided as Wasm modules.

- Message Queuing Systems

In a message queuing system, messages are sent to named queues or topics. The message broker (the software that provides the system) is responsible for ensuring that messages are delivered to one or more consumers or subscribers on a topic. There may be many consumers on the same topic [1].

A Wasm-based framework relieves message queuing system users of operating their own infrastructure to react to messages. Instead, message processing logic can be provided in a Wasm module and the queuing system distributes this work accordingly.

💡Atmo by Suborbital is a Cloud-Native Wasm application framework, which can be used in a streaming/subscription-oriented context.

- Workflow Systems

A workflow models a complex process by decomposing it into discrete, sequential, and potentially dependent operations (workflow tasks). Workflows often have heterogeneous components, which are implemented in different programming languages and may have a first-party or third-party origin.

A Wasm-based framework replaces containers with Wasm modules as the runtime for workflow tasks. This provides better performance and enables workflow system users to confidently and securely utilize third-party components.

❗This is the topic of my master’s thesis, which you can read more about in a separate series of articles. You can now also try WebAssembly in the context of Argo Workflows with my plugin.
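The decomposition of a workflow into dependent tasks can be sketched in a few lines of Python (3.9 or later, for the standard library's `graphlib`). The task names, the `run_workflow` helper, and the convention of passing results as a dictionary are hypothetical. Plain Python functions stand in for the Wasm modules that would act as task runtimes.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List

# Each task receives the results of the tasks it depends on.
Task = Callable[[Dict[str, int]], int]


def run_workflow(
    tasks: Dict[str, Task], depends_on: Dict[str, List[str]]
) -> Dict[str, int]:
    """Run tasks in dependency order and return every task's result."""
    results: Dict[str, int] = {}
    # static_order() yields each task only after all of its dependencies.
    for name in TopologicalSorter(depends_on).static_order():
        inputs = {dep: results[dep] for dep in depends_on.get(name, [])}
        results[name] = tasks[name](inputs)
    return results


# In a Wasm-based workflow system, each of these could be a separate module,
# possibly written in a different language or supplied by a third party.
tasks: Dict[str, Task] = {
    "load": lambda inputs: 10,
    "double": lambda inputs: inputs["load"] * 2,
    "increment": lambda inputs: inputs["load"] + 1,
    "sum": lambda inputs: inputs["double"] + inputs["increment"],
}
depends_on = {
    "load": [],
    "double": ["load"],
    "increment": ["load"],
    "sum": ["double", "increment"],
}

results = run_workflow(tasks, depends_on)
print(results["sum"])
```

This sketch runs tasks one after another. A real workflow engine would also run independent tasks such as `double` and `increment` in parallel.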

- Distributed Actor Frameworks

The actor model is a programming model that deals with concurrent operations in a distributed system. Logic is encapsulated in actors, each of which represents one entity. An actor communicates with another actor exclusively by sending and receiving asynchronous messages (message passing) [1].

In a distributed, Wasm-based actor system, each actor is implemented as a Wasm module. The framework provides methods to invoke a module for a received message and a means to send messages to other actors in the system.

💡Wasmcloud is a Wasm-based distributed actor system.
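The message-passing pattern described above can be sketched as follows. This is a single-process Python model, not how Wasmcloud or any other framework is implemented: the `ActorSystem` class and its `spawn`, `send`, and `run` methods are hypothetical, and Python closures stand in for actors that would each be a Wasm module.

```python
from collections import deque
from typing import Any, Callable, Deque, Dict, List, Tuple

# An actor is a handler that receives the system (to send messages) and a message.
Handler = Callable[["ActorSystem", Any], None]


class ActorSystem:
    """Single-threaded message router; a stand-in for a distributed runtime."""

    def __init__(self) -> None:
        self._actors: Dict[str, Handler] = {}
        self._pending: Deque[Tuple[str, Any]] = deque()

    def spawn(self, name: str, handler: Handler) -> None:
        """Register an actor under a name that other actors can address."""
        self._actors[name] = handler

    def send(self, recipient: str, message: Any) -> None:
        """Enqueue a message; delivery happens later, not during this call."""
        self._pending.append((recipient, message))

    def run(self) -> None:
        """Deliver messages in FIFO order until none are left."""
        while self._pending:
            recipient, message = self._pending.popleft()
            self._actors[recipient](self, message)


def make_counter() -> Handler:
    """Actor with private state that is reachable only through messages."""
    total = 0

    def counter(system: ActorSystem, message: Any) -> None:
        nonlocal total
        total += message
        system.send("logger", f"total={total}")

    return counter


log: List[str] = []


def logger(system: ActorSystem, message: Any) -> None:
    log.append(message)


system = ActorSystem()
system.spawn("counter", make_counter())
system.spawn("logger", logger)
system.send("counter", 2)
system.send("counter", 3)
system.run()
print(log)
```

The counter's state is never shared directly. Other actors only learn about it through the messages it sends, which is what makes the model a natural fit for isolated Wasm modules.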

- Serverless / Function-as-a-Service (FaaS)

The terms serverless and FaaS loosely describe a cloud-native platform for short-running, stateless computation which scales up and down instantly and automatically. Its users are charged for actual usage (function runtime) at millisecond granularity [2].

Wasm-based serverless runtimes can improve the runtime characteristics of such systems by tackling their most prominent drawbacks: cold-start delay and resource usage when not in use. These occur because the serverless runtime starts containers on-demand and leaves them running between requests to optimize request latency and throughput.

💡wagi implements CGI for Wasm modules so that they can be used as HTTP handlers. Hippo is a Platform-as-a-Service tool to deploy Wasm-based applications. Atmo by Suborbital is an application framework for WebAssembly in the Cloud-Native ecosystem.

Conclusion

I’m excited to see a new view of web applications come to life. The Cloud-Native community will most certainly play an important role in developing frameworks and tools to make application development and deployment easier, faster, and more reliable.

Please reach out to me if you’re interested in building systems with headless WebAssembly or running WebAssembly on Kubernetes. You can reach me best on Twitter @sh4rk or on my LinkedIn profile.

References

[1] Kleppmann, Martin (2017): Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems. O'Reilly Media, Inc.

[2] Fox, G. C., Ishakian, V., Muthusamy, V., & Slominski, A. (2017). Status of serverless computing and function-as-a-service (faas) in industry and research. arXiv preprint arXiv:1708.08028.